Learning Object Grasping for Soft Robot Hands

PEOPLE

ABSTRACT

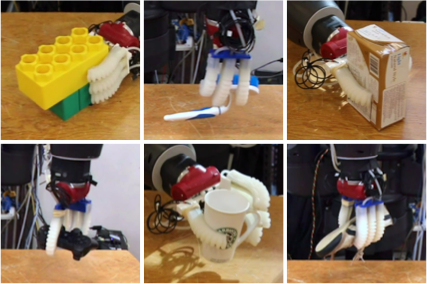

We present a 3D deep convolutional neural network (3D CNN) approach for grasping unknown objects with soft hands. Soft hands are compliant and can handle uncertainty in sensing and actuation, but this comes at the cost of unpredictable deformation of the soft fingers. Traditional model-driven grasping approaches assume known models of the object, the robotic hand, and stable grasps with expected contacts. They are inapplicable to such hands because the contact points between an object and a soft hand are difficult to predict. Our solution uses a deep CNN to find good caging grasps for previously unseen objects, learning effective features and a classifier directly from point cloud data. Recent CNN models for robotic grasping are trained on 2D or 2.5D images and limited to a fixed top grasping direction. In contrast, we exploit a 3D CNN to estimate suitable grasp poses from multiple grasping directions (top and side) and wrist orientations, a capability with great potential for geometry-related robotic tasks. Guided by the 3D CNN, our soft hands achieve an 87% grasp success rate on previously unseen objects. A set of comparative evaluations shows that the approach is robust to noise and occlusions.
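The abstract describes scoring candidate grasps from a 3D representation of the point cloud rather than from a 2D image. The sketch below illustrates that general pattern only, under stated assumptions. Each candidate pose (a top or side approach direction combined with a wrist rotation) is handled in three steps: re-center the cloud and rotate it into a hypothetical hand frame, voxelize it into an 8x8x8 occupancy grid, then score it with a single 3D convolution, ReLU, average pooling, and a logistic output. The frame convention, grid size, workspace extent, and the hand-picked kernel (a stand-in for learned weights) are illustrative assumptions, and the helper names are hypothetical. This is not the network architecture or training procedure from the paper.

```python
import itertools
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]
Grid = List[List[List[float]]]


def rotate_x(p: Point, a: float) -> Point:
    x, y, z = p
    c, s = math.cos(a), math.sin(a)
    return (x, c * y - s * z, s * y + c * z)


def rotate_z(p: Point, a: float) -> Point:
    x, y, z = p
    c, s = math.cos(a), math.sin(a)
    return (c * x - s * y, s * x + c * y, z)


def to_grasp_frame(points: Sequence[Point], direction: str, wrist: float) -> List[Point]:
    """Center the cloud, then express it in a hypothetical hand frame whose
    approach axis is z: 'top' keeps z, 'side' tilts a horizontal axis onto z."""
    n = len(points)
    cx, cy, cz = (sum(p[i] for p in points) / n for i in range(3))
    out = []
    for x, y, z in points:
        p = (x - cx, y - cy, z - cz)
        if direction == "side":
            p = rotate_x(p, math.pi / 2)
        out.append(rotate_z(p, wrist))  # wrist orientation about the approach axis
    return out


def voxelize(points: Sequence[Point], n: int = 8, extent: float = 0.2) -> Grid:
    """Binary occupancy grid over an assumed cube of side `extent` (meters)."""
    grid = [[[0.0] * n for _ in range(n)] for _ in range(n)]
    for p in points:
        idx = [int(math.floor((c + extent / 2) / extent * n)) for c in p]
        if all(0 <= i < n for i in idx):  # drop points outside the cube
            grid[idx[0]][idx[1]][idx[2]] = 1.0
    return grid


def conv3d_relu(grid: Grid, kernel: Grid) -> Grid:
    """'Valid' 3D convolution (cross-correlation) followed by ReLU."""
    n, k = len(grid), len(kernel)
    m = n - k + 1
    out = [[[0.0] * m for _ in range(m)] for _ in range(m)]
    for i, j, l in itertools.product(range(m), repeat=3):
        acc = 0.0
        for a, b, c in itertools.product(range(k), repeat=3):
            acc += kernel[a][b][c] * grid[i + a][j + b][l + c]
        out[i][j][l] = max(acc, 0.0)
    return out


def grasp_score(grid: Grid, kernel: Grid, bias: float) -> float:
    """Conv + ReLU, global average pooling, then a logistic 'grasp success' score."""
    feat = conv3d_relu(grid, kernel)
    vals = [v for plane in feat for row in plane for v in row]
    return 1.0 / (1.0 + math.exp(-(sum(vals) / len(vals) + bias)))


def best_grasp(points: Sequence[Point], wrist_steps: int = 4) -> Tuple[float, str, float]:
    # Placeholder kernel standing in for trained weights (purely illustrative).
    kernel = [[[1.0 if a == 1 else -0.5 for _ in range(3)] for _ in range(3)] for a in range(3)]
    wrists = [k * 2 * math.pi / wrist_steps for k in range(wrist_steps)]
    scored = [
        (grasp_score(voxelize(to_grasp_frame(points, d, w)), kernel, bias=0.0), d, w)
        for d, w in itertools.product(("top", "side"), wrists)
    ]
    return max(scored)  # highest-scoring (score, direction, wrist angle)


if __name__ == "__main__":
    box = [(x * 0.01, y * 0.01, z * 0.01) for x in range(10) for y in range(4) for z in range(6)]
    print(best_grasp(box))
```

With trained weights in place of the placeholder kernel, the highest-scoring (direction, wrist angle) pair would be the pose sent to the hand. Because every candidate is evaluated on a 3D grid built in its own approach frame, side approaches are scored the same way as top approaches in this sketch.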


PUBLICATIONS

, Wilko Schwarting, Joseph DelPreto, and Daniela Rus, “Learning Object Grasping for Soft Robot Hands”, IEEE Robotics and Automation Letters (RA-L), vol. 3, no. 3, pp. 2370-2377, Jul. 2018.